You will need to configure two databases to get Pentaho up and running: a "Hibernate" database and a "Quartz" database.

    echo "create database hibernate " | mysql
    echo "grant all on hibernate.* to | mysql
    echo "set password for = password('') " | mysql
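The database setup can be wrapped in a small script. What follows is a minimal sketch, not the documented procedure. The account name, host and password (`DB_USER`, `DB_HOST`, `DB_PASS`) are hypothetical placeholders, because the commands above leave them out. The database name `quartz` and the helper function names are assumptions too. The `set password ... = password(...)` form follows the text above and is older MySQL syntax; recent MySQL releases no longer accept it.

```shell
#!/bin/sh
# Sketch only: DB_USER, DB_HOST and DB_PASS are hypothetical placeholders;
# the source text omits the real account name and password.
DB_USER="${DB_USER:-pentaho_user}"
DB_HOST="${DB_HOST:-localhost}"
DB_PASS="${DB_PASS:-changeme}"

# Print the SQL that creates one database ($1) and grants access to it.
db_setup_sql() {
    printf 'create database %s;\n' "$1"
    printf "grant all on %s.* to '%s'@'%s';\n" "$1" "$DB_USER" "$DB_HOST"
}

# Print the statement that sets the account password (older MySQL syntax).
password_sql() {
    printf "set password for '%s'@'%s' = password('%s');\n" \
        "$DB_USER" "$DB_HOST" "$DB_PASS"
}

# Print every statement for both databases.
all_sql() {
    db_setup_sql hibernate
    db_setup_sql quartz   # assumed name for the Quartz database
    password_sql
}

# Dry run by default: only print the SQL. Set APPLY=1 to pipe it into mysql.
if [ "${APPLY:-0}" = "1" ]; then
    all_sql | mysql
else
    all_sql
fi
```

Running the script without `APPLY=1` only prints the statements, so they can be reviewed before anything touches the server.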

The existing Mifos application database (mifos) will be the SourceDB. The Mifos Data Warehouse database (mifos_dw) will be the DestinationDB, which will be created by running the ETL script later on.

Remove these existing files/dirs before following the Install Process below:

- reports/CDFReportingTemplate/template-dashboard-mifosreports.html
- /etc/pentaho/system/pentaho-cdf/resources/style/images/*.jpg
- /etc/pentaho/reports/CDFReportingTemplate

Install Process

Install base OS dependencies:

    sudo add-apt-repository "deb lucid partner"
    sudo apt-get install sun-java6-jdk libmysql-java

Install Data Integration/ETL: extract it to a location we will call $PDI_HOME (the root of the Pentaho Data Integration).

Get Mifos BI 1.3:

    unzip -d $PDI_HOME mifos_bi-1.3.0.zip ETL/*

Extract the files etl_build.sh, etl_build_prod.sh, ppi_build.sh to $PDI_HOME:

    unzip -d $PDI_HOME mifos_bi-1.3.0.zip etl_build.sh etl_build_prod.sh ppi_build.sh

John W: These 3 sh files aren't in the 'download' zip (they are under the git bi directory, however). They probably aren't needed, as running full_reload.sh (with proper arguments) works for me. However, Sumit has set up the hosted MFI to use etl_build_prod.sh with cron. So the download is okay as long as the instructions in 'Run ETL Job' below are written for full_reload.sh.

Configure Pentaho database settings and install ETL
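Since the three helper scripts may be absent from the download zip, a quick check after unzipping shows which ones actually landed in `$PDI_HOME`. This is a minimal sketch; the function name `missing_scripts` is hypothetical, and the check only looks for the three file names mentioned in the text.

```shell
#!/bin/sh
# Print the name of each helper script that is NOT present in directory $1.
# Prints nothing when all three scripts are there.
missing_scripts() {
    dir="$1"
    for f in etl_build.sh etl_build_prod.sh ppi_build.sh; do
        [ -f "$dir/$f" ] || echo "$f"
    done
}

# Only run the check when PDI_HOME is set in the environment.
if [ -n "${PDI_HOME:-}" ]; then
    missing_scripts "$PDI_HOME"
fi
```

If the check lists any names, the copies under the git bi directory are the ones to look at, or the job can be run through full_reload.sh instead.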

#PENTAHO DATA INTEGRATION INSTALL JDBC DRIVER HOW TO#

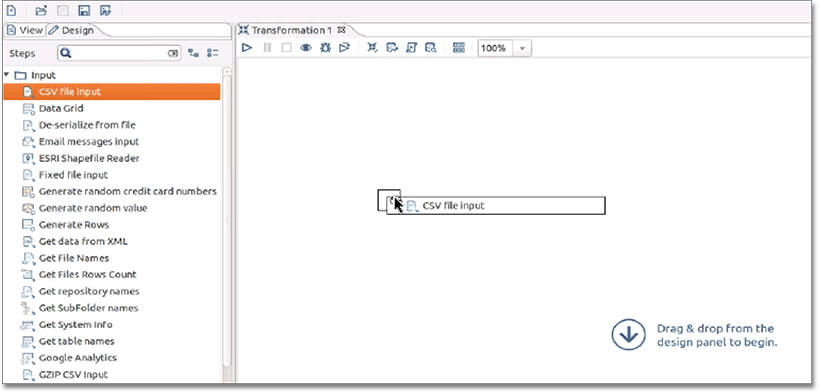

This document shows you how to first set up and configure Pentaho Data Integration to run the ETL scripts necessary for some BI and reporting (PPI data export, and reports which show a "Data for this report last updated on at 18:58:04" type message when entering parameters). It then shows you how to set up the Pentaho business intelligence J2EE web application in an existing Tomcat instance. Mifos BI 1.3 works with Mifos 2.0 and above. Note: these tools can also be run on Windows, but instructions and scripts are currently provided only for Ubuntu.

Pentaho Data Integration Community Edition 4.0.0.

#PENTAHO DATA INTEGRATION INSTALL JDBC DRIVER SOFTWARE#

    : Problem encountered determining query fields: .:
    Couldn't get field info from
        at .ui.$1.run(GetQueryFieldsProgressDialog.java:73)
        at .ModalContext$n(ModalContext.java:113)
    Caused by: .:
        at .(Database.java:2323)
        at .(Database.java:2240)
        at .(Database.java:1818)
        at .ui.$1.run(GetQueryFieldsProgressDialog.java:65)
        at .a(IfxSqli.java:3204)
        at .E(IfxSqli.java:3518)
        at .dispatchMsg(IfxSqli.java:2353)
        at .receiveMessage(IfxSqli.java:2269)
        at .executeStatementQuery(IfxSqli.java:1428)
        at .executeStatementQuery(IfxSqli.java:1401)
        at .a(IfxResultSet.java:204)
        at .executeQueryImpl(IfxStatement.java:1192)
        at .executeQuery(IfxStatement.java:186)
        at .(Database.

The application works fine when selecting a table without the new data types.

In order to run Mifos BI, you need a server running Ubuntu 10.04 LTS with the following software installed:

#PENTAHO DATA INTEGRATION INSTALL JDBC DRIVER DRIVER#

The new JDBC Informix driver now supports two new data types in Informix: BIGINT and BIGSERIAL. I use a SQL tool called iSQL-Viewer, and when I use the new driver, I can connect to my server, perform SQL, and look at the meta-data with no problems. When I used the preceding version of the driver, I could not do selects on any table with the two new data types or view the meta-data of that table. I updated the driver to the new version in the Spoon ~/libext/JDBC directory, but find that the Spoon application will not read a table or show the meta-data for any table that has the two new data types, and it throws the exception shown above.
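One thing worth ruling out, as an assumption rather than anything the post confirms, is an older copy of the Informix driver jar still sitting next to the new one in the Spoon JDBC directory. The sketch below lists the matching jars so that a leftover copy is easy to spot. The `ifx*.jar` pattern, the default directory and the function name `list_driver_jars` are all assumptions; adjust them to the actual install.

```shell
#!/bin/sh
# Print every file in directory $1 whose name matches ifx*.jar
# (assumed naming pattern for the Informix JDBC driver jars).
list_driver_jars() {
    dir="$1"
    for jar in "$dir"/ifx*.jar; do
        # When nothing matches, the glob stays literal; skip it.
        [ -e "$jar" ] || continue
        echo "$jar"
    done
}

# Assumed default location; override by setting JDBC_DIR.
JDBC_DIR="${JDBC_DIR:-${PDI_HOME:-.}/libext/JDBC}"

list_driver_jars "$JDBC_DIR"
count=$(list_driver_jars "$JDBC_DIR" | wc -l | tr -d ' ')
if [ "$count" -gt 1 ]; then
    echo "warning: $count Informix driver jars found in $JDBC_DIR" >&2
fi
```

If more than one jar shows up, moving the older one out of the directory and restarting Spoon is a cheap experiment before digging into the stack trace.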